Posted on February 25, 2004

Introduction

Early supercomputers used parallel processing and distributed computing to link processors together in a single machine. Using freely available tools, it is possible to do the same today with inexpensive PCs: a cluster. Glen Gardner liked the idea, so he built himself a massively parallel Mini-ITX cluster using 12 x 800 MHz nodes.

The machine runs FreeBSD 4.8 and MPICH 1.2.5.2. Based on Glen's basic tests, the cluster looks to be equivalent to at least 4 (maybe 6) 2.4 GHz Pentium 4 boxes in parallel on a similar network, achieving a performance of around 3.6 GFLOPS. With the exception of the metalwork, power wiring, and power/reset switching, everything is off the shelf. Rather impressive, we'd say, though he *is* root on a 1.1 TFLOPS 528 CPU monster, the 106th fastest computer in the world...
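To put the two performance figures side by side, here is a rough back-of-the-envelope sketch. It uses only the numbers quoted above (3.6 GFLOPS over 12 nodes, 1.1 TFLOPS over 528 CPUs); the function and variable names are made up for illustration, and since no official benchmarks were run the results are estimates at best.

```python
# Figures quoted in the introduction; the 3.6 GFLOPS value is an estimate,
# not a benchmark result.
CLUSTER_GFLOPS = 3.6          # 12-node Mini-ITX cluster
CLUSTER_NODES = 12
BIG_MACHINE_GFLOPS = 1100.0   # 1.1 TFLOPS expressed in GFLOPS
BIG_MACHINE_CPUS = 528


def gflops_per_unit(total_gflops, units):
    """Average throughput per node or CPU, assuming an even split."""
    return total_gflops / units


per_node = gflops_per_unit(CLUSTER_GFLOPS, CLUSTER_NODES)
per_cpu = gflops_per_unit(BIG_MACHINE_GFLOPS, BIG_MACHINE_CPUS)

print(f"Mini-cluster:    {per_node:.2f} GFLOPS per node")
print(f"528-CPU machine: {per_cpu:.2f} GFLOPS per CPU")
```

On these figures each 800 MHz node contributes roughly 0.3 GFLOPS, against about 2 GFLOPS per CPU on the larger machine.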

The "Mini-Cluster"

I built a Mini-ITX based massively parallel cluster named PROTEUS. I have 12 nodes using VIA EPIA V8000 800 MHz motherboards. The little machine is running FreeBSD 4.8 and MPICH 1.2.5.2. Troubles installing and configuring FreeBSD and MPICH were few; in fact, there were no major issues with either.

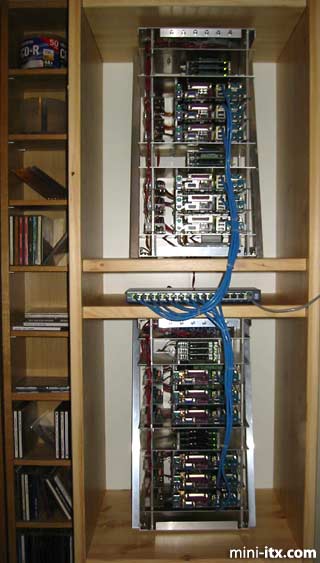

The construction is simple and inexpensive. The motherboards were stacked using threaded aluminum standoffs and then mounted on aluminum plates. Two stacks of three motherboards were assembled into each rack. Diagonal stiffeners were fabricated from aluminum angle stock to reduce flexing of the rack assembly.

The controlling node has a 160 GB ATA-133 HDD, and the computational nodes use 340 MB IBM microdrives in compact flash to IDE adapters. For file I/O, the computational nodes mount a partition on the controlling node's hard drive by means of a network file system mount point.

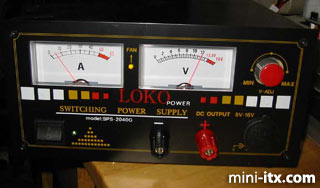

Each motherboard is powered by a Morex DC-DC converter, and the entire cluster is powered by a rather large 12V DC switching power supply.

With the exception of the metalwork, power wiring, and power/reset switching, everything is off the shelf.

The original 6 node configuration.

The completed 12 node cluster.

This image shows the power use (60 watts) at idle for 6 nodes.

At present, the idle power consumption is about 140 Watts (for 12 nodes) with peaks estimated at around 200 Watts. The machine runs cool and quiet. The controlling node has 256 MB RAM and a 160 GB ATA-133 IDE hard disk drive. The computational nodes have 256 MB RAM each and boot from 340 MB IBM microdrives by means of compact flash to IDE adapters. The computational nodes mount /usr on the controlling node via NFS, for storage and to allow for a very simple configuration. No official benchmarks have been run, but for simple computational tasks the mini cluster appears to be faster than four 2.4 GHz Pentium 4 machines used in parallel, at a fraction of the cost and power use.
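The power figures above are whole-cluster totals, so it can help to break them down per node. The following is a minimal sketch using only the numbers given in the text (60 W idle for 6 nodes, 140 W idle and an estimated 200 W peak for 12 nodes); the helper name is hypothetical and the even split across nodes is an assumption, since the controlling node carries a hard disk that the others do not.

```python
def watts_per_node(total_watts, nodes):
    """Average power per node, assuming the load is shared evenly."""
    return total_watts / nodes


idle_6_nodes = watts_per_node(60, 6)     # original 6 node configuration
idle_12_nodes = watts_per_node(140, 12)  # completed cluster at idle
peak_12_nodes = watts_per_node(200, 12)  # completed cluster, estimated peak

print(f"Idle, 6 nodes:  {idle_6_nodes:.1f} W per node")
print(f"Idle, 12 nodes: {idle_12_nodes:.1f} W per node")
print(f"Peak, 12 nodes: {peak_12_nodes:.1f} W per node")
```

That works out to somewhere between 10 and 17 Watts per node, depending on load.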

Power and Cooling

Mini-ITX boards have very low power dissipation compared to most motherboard/CPU combinations in popular use today. This means that a Mini-ITX cluster with as many as 16 nodes won't need special air conditioning. Low power dissipation also means low power use, so you can use a single inexpensive UPS to provide clean AC power for the nodes.

In contrast, a 12-16 node cluster built with Intel or AMD processors will generate enough heat that you will likely need heavy duty air conditioning. Additionally, you will need adequate electrical power to deliver the 2-3 kilowatt peak load that your 12 node PC cluster will require. Plan on having higher than average utility bills if you use PCs...
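To get a feel for what that difference means on the utility bill, here is a rough sketch comparing yearly energy use. It assumes, purely for illustration, that each machine runs at its quoted peak load around the clock for a full year, and it ignores air conditioning entirely; the 200 W and 2-3 kW figures come from the text, while the function name is made up.

```python
HOURS_PER_YEAR = 24 * 365  # assumption: running continuously, non-leap year


def annual_kwh(watts, hours=HOURS_PER_YEAR):
    """Energy used in kilowatt-hours at a constant power draw."""
    return watts * hours / 1000.0


mini_itx = annual_kwh(200)   # estimated peak of the 12 node Mini-ITX cluster
pc_low = annual_kwh(2000)    # lower end of the 2-3 kW PC cluster estimate
pc_high = annual_kwh(3000)   # upper end of the 2-3 kW PC cluster estimate

print(f"Mini-ITX cluster: {mini_itx:.0f} kWh per year")
print(f"PC cluster:       {pc_low:.0f} to {pc_high:.0f} kWh per year")
print(f"Ratio:            {pc_low / mini_itx:.0f}x to {pc_high / mini_itx:.0f}x")
```

Under those assumptions the PC cluster draws ten to fifteen times as much energy before any cooling is counted.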

Hardware Construction

The cluster is built in two nearly identical racks. Each rack has two stacks of three motherboards and DC-DC converters mounted on aluminum standoffs.


The compact flash adapters used to mount the microdrives are also in stacks of three. Each stack of boards is mounted on a 7 inch by 10 inch, 0.0625 inch thick 6061-T6 aluminum plate, as are the microdrive stacks. There are seven metal plates in all in each rack.


The top cover plate has the mounting bracket for the 6 on/off/reset switches.


The plate below it is home to the power distribution terminal block. The power delivery cable for each rack is heavy duty 14 gauge stranded wire with PVC insulation. The power cabling from the terminal strip to each of the DC-DC converters is 18 gauge stranded, PVC insulated hookup wire. The wiring for the power/reset switches is 24 gauge stranded, PVC insulated wire.
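As a sanity check on the wiring, the currents involved can be estimated from figures given elsewhere in this article: a 12V DC supply, an estimated 200 Watt peak for 12 nodes, and six nodes per rack. The sketch below assumes the peak load is split evenly between nodes and that the 200 Watt figure applies on the 12V side; the article does not say where the power was measured, so treat the results as rough upper estimates. The names are hypothetical.

```python
SUPPLY_VOLTS = 12.0   # DC supply voltage feeding the DC-DC converters
PEAK_WATTS = 200.0    # estimated peak for the whole cluster
NODES = 12
NODES_PER_RACK = 6    # two stacks of three motherboards per rack


def amps(watts, volts=SUPPLY_VOLTS):
    """Current drawn at a given power and voltage (I = P / V)."""
    return watts / volts


watts_per_node = PEAK_WATTS / NODES
per_converter_amps = amps(watts_per_node)               # 18 gauge feed
per_rack_amps = amps(watts_per_node * NODES_PER_RACK)   # 14 gauge rack cable

print(f"Per DC-DC converter: {per_converter_amps:.2f} A")
print(f"Per rack cable:      {per_rack_amps:.2f} A")
```

On these assumptions each converter feed carries about 1.4 A and each rack cable a little over 8 A.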
